Oculus / 6DoF Meta-control framework

Why

Lead the design of platform components focused specifically on 6DoF interaction and spatial interfaces.

How

3D and 2D design

Platform OS design

Art direction

Object Hierarchy

Rift 2.0 started with a single-object focus, but as we grow the OS to host user-generated content, we need a more holistic object hierarchy (similar to a file system).

Interaction pre-viz and prototype

We have good insight into traditional ways of navigating object hierarchies (e.g. tabs and breadcrumbs), and some of them have already been adopted in our VR ecosystem. So Kenny and I explored ways to express hierarchy through a more gestural, 6DoF-native approach.

Hypothesis

We can leverage proximity and proprioception to evaluate a gesture and infer whether the user wants to see more detail or a more abstract view.

Parameters

Detect the intent to traverse the hierarchy

Evaluate push and pull thresholds for entering and exiting the info layer

Understand how VFX, SFX and haptic affordances can guide manipulation

Calibrate for gestural fluidity (being able to complete multiple intents in one go)
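As an illustration of the push/pull threshold and fluidity parameters, here is a minimal sketch of a hierarchy-traversal detector. It assumes the tracker reports the hand's depth along the view axis in metres; the class name `HierarchyTraversal` and the threshold values are hypothetical, not the values tuned in the prototype. Re-anchoring after each transition acts as hysteresis (small jitter near a threshold does not toggle the layer) and lets a single continuous push cross several levels in one go.

```python
from dataclasses import dataclass

# Hypothetical tuning values in metres; the real thresholds came from prototyping.
PUSH_THRESHOLD_M = 0.10  # forward travel needed to go one level deeper
PULL_THRESHOLD_M = 0.06  # backward travel needed to go one level up


@dataclass
class HierarchyTraversal:
    anchor_depth: float  # hand depth (m) along the view axis when the gesture started
    level: int = 0       # 0 = object, 1 = info layer, 2+ = deeper layers
    max_level: int = 1

    def update(self, hand_depth: float) -> int:
        # Positive travel means the hand is pushing away from the user.
        travel = hand_depth - self.anchor_depth
        if travel >= PUSH_THRESHOLD_M and self.level < self.max_level:
            self.level += 1
            self.anchor_depth = hand_depth  # re-anchor so the next intent starts fresh
        elif travel <= -PULL_THRESHOLD_M and self.level > 0:
            self.level -= 1
            self.anchor_depth = hand_depth
        elif (travel < 0 and self.level == 0) or (travel > 0 and self.level == self.max_level):
            # Already at a boundary level: let the anchor follow the hand so the
            # next gesture in the opposite direction is measured from here.
            self.anchor_depth = hand_depth
        return self.level


traversal = HierarchyTraversal(anchor_depth=0.30, max_level=2)
for depth in (0.35, 0.41, 0.52, 0.48, 0.45):
    print(depth, traversal.update(depth))
```

Using a smaller pull threshold than push threshold is only one possible design choice; it makes backing out feel cheaper than diving in, which would need to be validated against the VFX, SFX and haptic cues.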

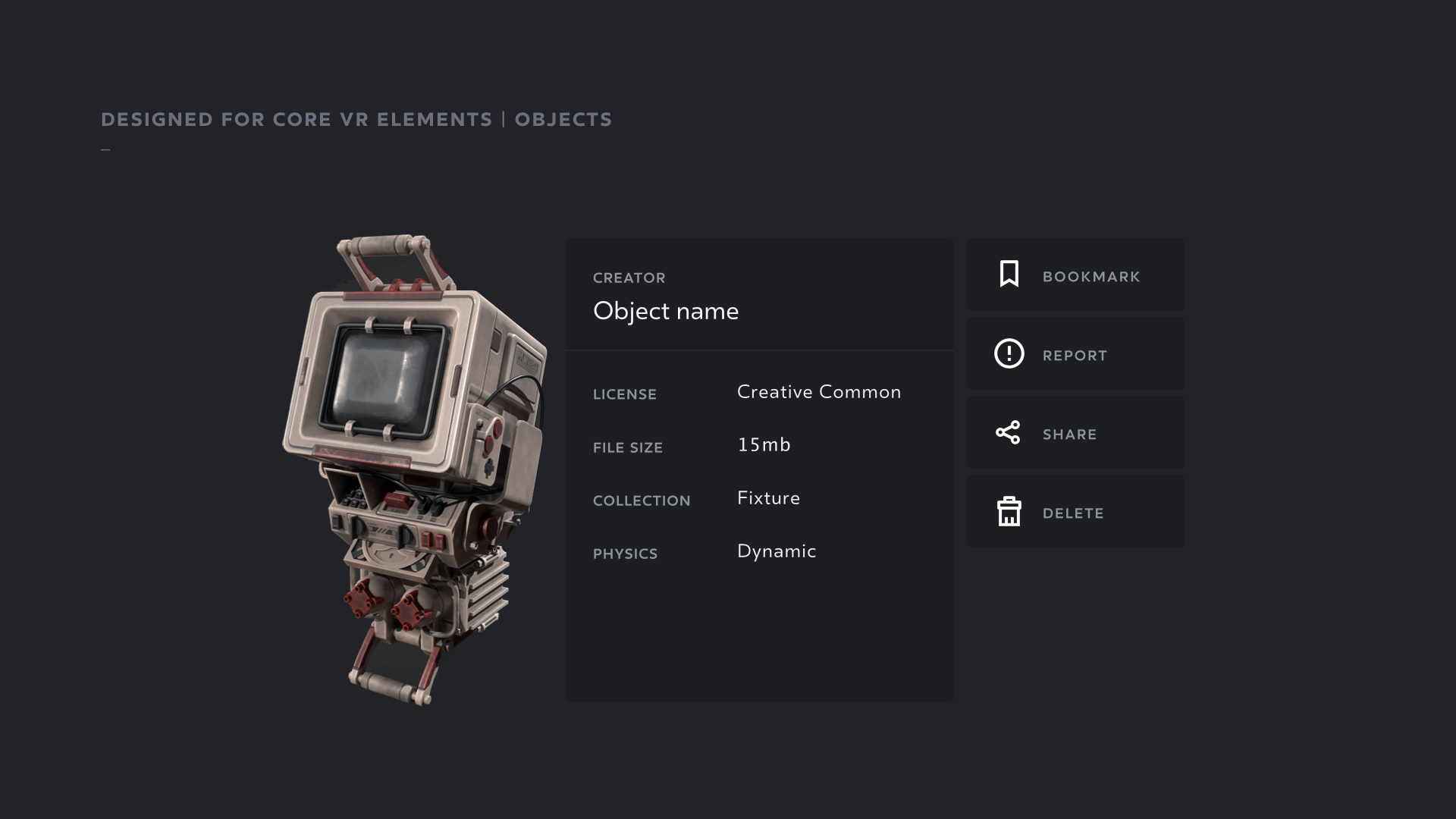

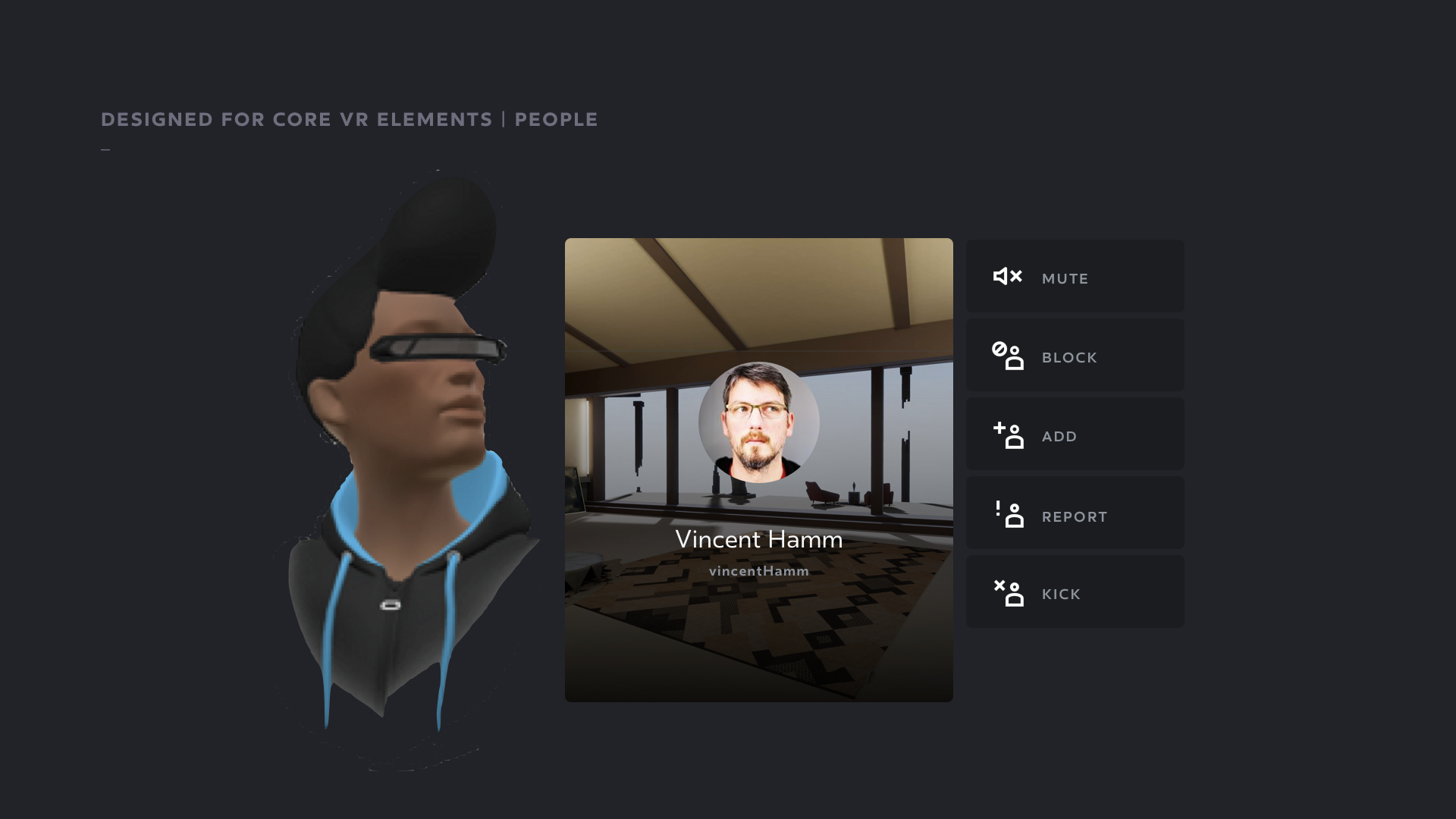

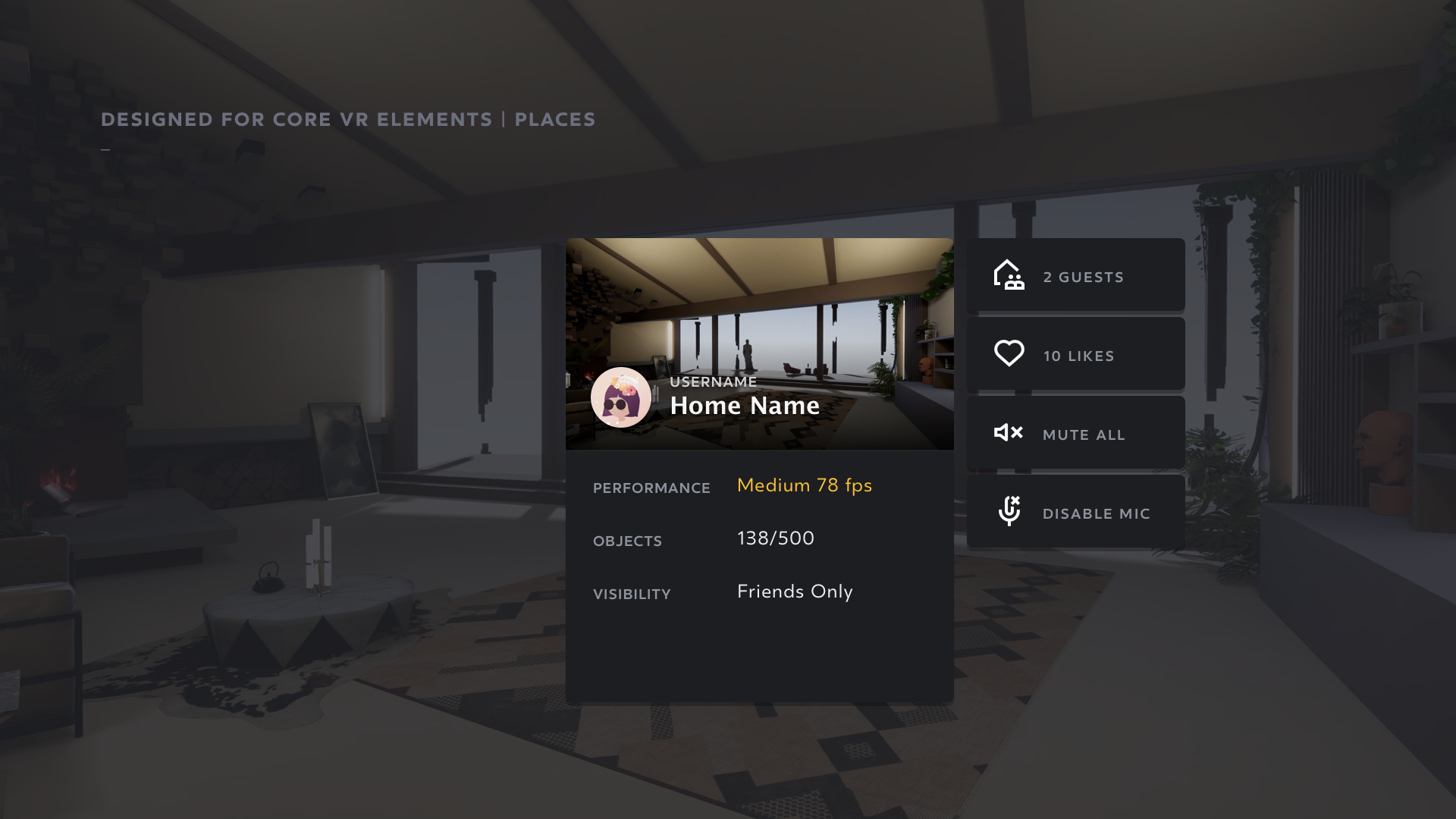

Context Menu / 2D info

As we work towards a full OS, we need to:

expose metadata (platform or user-imported)

access platform-level features (e.g. share and like)

Interaction pre-viz and prototype

Together with Kenny (prototyper), we validated a number of gestural efficiency parameters:

Accessibility

Ambidextrous (grabbing the card from either side flips the buttons to the opposite side)

Single-hand operable (summon/dismiss gesture and button interaction)

Precision

thresholds to activate and dismiss

minimising conflicts (particularly with social gestures)

target precision for a range of target sizes

respect the spatial relationship between target and card

Ergonomics

optimise for wrist movements over shoulder rotation

lazy mode
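The ambidextrous rule listed under Accessibility can be sketched as a small layout function. This assumes the grab point is expressed in card-local coordinates (metres, 0 at the card centre, +x to the right); the names `button_side` and `layout_buttons` and the vertical-column layout are hypothetical illustrations, not the shipped design.

```python
from enum import Enum


class Side(Enum):
    LEFT = -1
    RIGHT = 1


def button_side(grab_x: float) -> Side:
    # Grabbing the left half puts the buttons on the right edge, and vice versa,
    # so the grabbing hand never covers the buttons.
    return Side.RIGHT if grab_x < 0.0 else Side.LEFT


def layout_buttons(grab_x: float, card_half_width: float,
                   n_buttons: int, spacing: float) -> list[tuple[float, float]]:
    # Place a vertical column of buttons on the edge opposite the grab point.
    side = button_side(grab_x)
    x = side.value * card_half_width
    # Centre the column vertically on the card.
    top = (n_buttons - 1) * spacing / 2.0
    return [(x, top - i * spacing) for i in range(n_buttons)]


print(layout_buttons(grab_x=-0.05, card_half_width=0.10, n_buttons=3, spacing=0.04))
```

Mirroring the layout from a single signed value keeps the left- and right-handed cases symmetric, so only one set of spacing and target-size values needs to be calibrated.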

Visual design components

The initial proposal supports the three core elements of our VR ecosystem:

Objects (imported or built-in)

People (avatars)

Environments (spaces or applications)